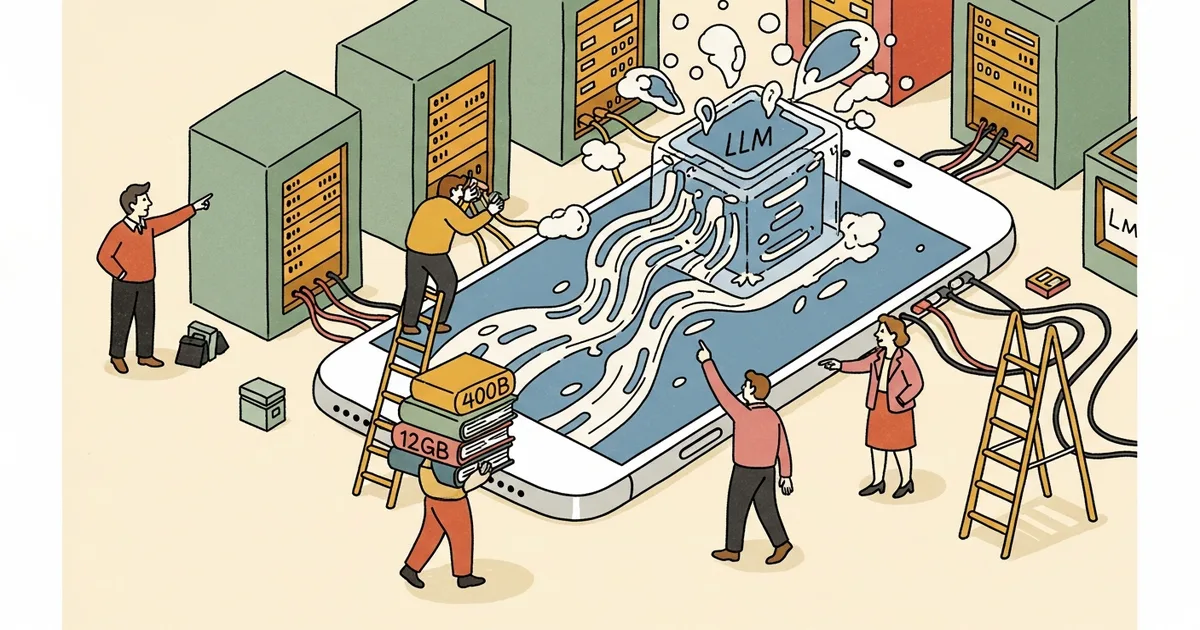

Developers have demonstrated the iPhone 17 Pro running a 400-billion-parameter large language model even though the phone has only 12GB of RAM. That is far less than the roughly 200GB a model this size occupies even when its weights are compressed (quantized) to about 4 bits each. The achievement relies on software optimizations that stream model weights from storage into memory on demand, processing only the layers needed for each step of inference instead of loading the whole model at once.
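The 200GB figure is the weight footprint $M$ of a model with $N$ parameters stored at $b$ bits per weight. Assuming roughly 4 bits per weight (the exact format used in the demonstration is not stated here):

$$
M = N \cdot \frac{b}{8} = \left(400 \times 10^{9}\right) \cdot \frac{4}{8}\ \text{bytes} = 2 \times 10^{11}\ \text{bytes} \approx 200\ \text{GB}
$$

At 16 bits per weight the same model would need about 800GB. Even at 4 bits, it is more than 16 times larger than the phone's 12GB of RAM.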

The technique, commonly called offloading or layer-by-layer streaming, treats the device's flash storage as an extension of RAM. The model's weights stay in the iPhone's flash storage, and only the active layers (the subset being computed at a given moment) are loaded into RAM. When a layer's computation finishes, its weights are evicted and the next layer's weights are loaded in their place. The approach trades speed for memory: the model runs far slower than it would with all of its weights resident in RAM, but it runs at all, which was widely considered impractical on a mobile device.
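The load, compute, evict loop described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the code used in the demonstration: the model is a hypothetical stack of tiny 4×4 dense layers with ReLU, each stored in its own file, and every helper name (`save_layer`, `load_layer`, `streamed_forward`, `build_toy_model`) is invented for this example. At most one layer's weights are held in memory at a time.

```python
import os
import tempfile
from array import array

DIM = 4  # hypothetical toy hidden size; real layers are vastly larger


def save_layer(path, weights):
    """Write one DIM x DIM weight matrix (row-major doubles) to its own file."""
    with open(path, "wb") as f:
        array("d", weights).tofile(f)


def load_layer(path):
    """Read one layer's weights from storage into RAM."""
    buf = array("d")
    with open(path, "rb") as f:
        buf.fromfile(f, DIM * DIM)
    return buf


def apply_layer(weights, x):
    """Compute relu(W @ x) for a row-major DIM x DIM matrix W."""
    out = []
    for r in range(DIM):
        s = sum(weights[r * DIM + c] * x[c] for c in range(DIM))
        out.append(max(0.0, s))
    return out


def streamed_forward(layer_paths, x):
    """Run every layer in order while keeping only one layer resident.

    Returns the output vector and the peak number of weights held in memory.
    """
    peak_resident = 0
    for path in layer_paths:
        w = load_layer(path)  # stream this layer in from storage
        peak_resident = max(peak_resident, len(w))
        x = apply_layer(w, x)
        del w  # evict the weights before the next layer is loaded
    return x, peak_resident


def build_toy_model(directory, n_layers, scale=2.0):
    """Write n_layers hypothetical layers (scale * identity), one file each."""
    paths = []
    for i in range(n_layers):
        w = [scale if r == c else 0.0 for r in range(DIM) for c in range(DIM)]
        path = os.path.join(directory, f"layer_{i:03d}.bin")
        save_layer(path, w)
        paths.append(path)
    return paths


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        paths = build_toy_model(d, n_layers=3)
        y, peak = streamed_forward(paths, [1.0, -2.0, 3.0, 0.5])
        print(y, peak)  # [8.0, 0.0, 24.0, 4.0] 16
```

A practical implementation would likely also prefetch the next layer from storage while the current one computes, so that I/O and compute overlap; this sketch keeps the two steps sequential for clarity.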

The practical implications are limited but symbolically significant. With layer streaming, a 400B model on an iPhone generates tokens too slowly for interactive chat. The demonstration does show, however, that RAM capacity is not an absolute barrier between mobile devices and frontier-scale models: software can work around it, even though speed remains bound by hardware. As Apple's Neural Engine improves and storage bandwidth rises in future devices, the speed penalty for running large models on-device should shrink.
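Why the speed penalty is so large can be estimated with back-of-the-envelope arithmetic. Assuming a dense model that reads every weight once per generated token, that weights already cached in RAM cost nothing to reuse, and that computation fully overlaps I/O, the time per token is bounded below by the bytes that must come from flash divided by the storage read bandwidth. The function name and all input figures below are hypothetical placeholders, not measurements from the demonstration.

```python
def seconds_per_token(model_gb, resident_gb, read_gb_per_s):
    """Rough lower bound on decode latency when weights stream from flash.

    Hypothetical assumptions: a dense model that reads every weight once per
    token, cached weights reused at no cost, and compute fully overlapped
    with I/O, so storage bandwidth is the only cost counted.
    """
    # Weights that do not fit in the RAM cache must be re-read for every token.
    streamed_gb = max(0.0, model_gb - resident_gb)
    return streamed_gb / read_gb_per_s


if __name__ == "__main__":
    # Placeholder inputs: 200GB of weights, 10GB kept resident, 2GB/s reads.
    t = seconds_per_token(200, 10, 2.0)
    print(f"{t:.1f} s/token, {60 / t:.2f} tokens/minute")
```

With those placeholder inputs, `seconds_per_token(200, 10, 2.0)` returns 95.0, well over a minute per token. Under these assumptions the estimate scales inversely with read bandwidth, so doubling storage throughput would halve it.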

The iPhone 17 Pro and the M5 iPad Pro both have 12GB of RAM, which gives them more headroom than earlier iPhones as platforms for large-model inference research. Previous iPhones, with 6-8GB of RAM, could hold models of roughly 7-13 billion parameters entirely in memory: useful for simple tasks but far below the capability of frontier models. Reaching 400B, even with heavy speed compromises, is a qualitative change in what mobile hardware can theoretically support.
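The rough 7-13B range for 6-8GB phones can be sanity-checked by working backward from memory size: divide the RAM available for weights by the bytes stored per weight. The sketch below assumes, hypothetically, that about 75% of physical RAM is left for model weights, treats 1GB as 10^9 bytes, and ignores the KV cache and activations, which would lower the real limit further. The function name is invented for illustration.

```python
def max_params_billion(ram_gb, usable_fraction, bits_per_weight):
    """Largest parameter count (in billions) whose weights fit in usable RAM.

    Hypothetical simplification: ignores the KV cache, activations, and
    runtime overhead, so the true limit is lower.
    """
    usable_bytes = ram_gb * 1e9 * usable_fraction  # 1GB taken as 10**9 bytes
    bytes_per_weight = bits_per_weight / 8
    return usable_bytes / bytes_per_weight / 1e9


if __name__ == "__main__":
    # 0.75 is an assumed share of RAM left for weights after the OS and apps.
    for ram in (6, 8, 12):
        print(f"{ram}GB -> ~{max_params_billion(ram, 0.75, 4):.0f}B params at 4-bit")
```

With those assumptions, 6GB and 8GB of RAM give about 9B and 12B parameters at 4 bits per weight, in line with the range above; 12GB gives about 18B, still far short of 400B without streaming.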

For Apple's AI strategy, on-device inference of large models aligns with the company's privacy-first positioning. Running AI workloads locally rather than in the cloud removes the need to send user data to external servers. If future Apple silicon can run 400B+ models at interactive speeds without offloading, Apple would gain a genuine privacy advantage over cloud-dependent AI assistants, a differentiator that matters to users who value data sovereignty over raw capability.