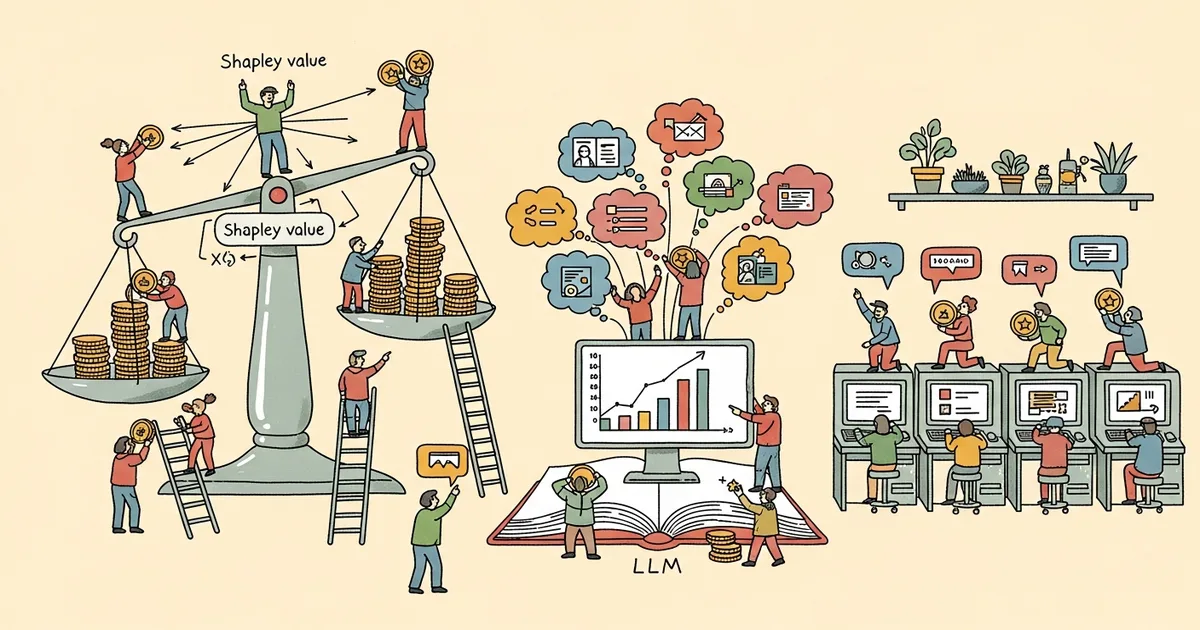

A four-person research team has proposed a targeted modification to Group Relative Policy Optimization (GRPO) that replaces its uniform reward scheme with candidate-specific signals derived from cooperative game theory. The paper, submitted to arXiv on March 31, 2026, addresses a structural flaw in how GRPO handles multi-candidate generation tasks — one that the authors argue causes poor outputs to receive unwarranted positive training signals.

- GRPO assigns identical rewards to all candidates in a generated set, allowing weak outputs to benefit from a single strong peer — a “free-rider” effect that degrades training quality.

- ShapE-GRPO decomposes set-level rewards into individual signals using Shapley values, assigning each candidate a reward equal to its average marginal contribution to the set.

- The method preserves the four classical Shapley axioms — efficiency, symmetry, linearity, and the null player property — and operates in polynomial time.

- In the paper's reported experiments across diverse datasets, ShapE-GRPO outperformed standard GRPO and converged faster during training.

What Happened

Rui Ai, Yu Pan, David Simchi-Levi, and Chonghuan Wang submitted a paper titled “ShapE-GRPO: Shapley-Enhanced Reward Allocation for Multi-Candidate LLM Training” to arXiv on March 31, 2026 (arXiv:2603.29871). The paper proposes a new reinforcement learning post-training method that modifies GRPO’s reward structure to address what the authors call a free-rider problem in multi-candidate generation.

The work targets scenarios where LLMs must produce a set of outputs — recommendations, code suggestions, brainstorming responses — with the goal of maximizing collective utility across the entire set rather than optimizing each output individually. These are common deployment patterns in production AI systems.

Why It Matters

GRPO has become a prominent reinforcement learning post-training technique, used in several high-profile open-source model training runs. It samples a group of candidate outputs, scores them, and uses the group-normalized rewards as policy-gradient training signals.
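For reference, the group-relative normalization commonly associated with standard GRPO can be sketched as follows. This is a minimal illustration and not code from the paper: the function name `group_relative_advantages`, the `eps` stabilizer, and the use of the population standard deviation are assumptions made for the example.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize rewards within one sampled group (illustrative sketch).

    Each reward is centered on the group mean and scaled by the group
    standard deviation, so advantages are relative to the group's peers.
    """
    mu = mean(rewards)
    # Assumption: population std; some implementations use the sample std.
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical rewards for a group of four sampled outputs.
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
print(advantages)
```

Outputs that score above the group mean receive positive advantages and those below receive negative ones, which is why the reward assigned to each candidate matters so much for what the policy learns.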

The limitation the authors identify is consequential: when GRPO assigns a single scalar reward uniformly to every candidate in a set, a set containing one high-quality output and several low-quality ones reinforces all of them as if they had contributed equally. The training signal then carries no information about which candidates actually earned the reward, which weakens guidance toward better individual outputs and limits exploration during training.
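A toy example makes the free-rider effect concrete. The sketch below assumes a hypothetical set-level utility equal to the quality of the best candidate in the set; the paper's actual utility functions are not described in the abstract, and the names `set_utility` and `uniform_rewards` are illustrative only.

```python
def set_utility(qualities):
    # Hypothetical utility: a set is as good as its best candidate.
    return max(qualities, default=0.0)


def uniform_rewards(qualities):
    # Every candidate receives the same set-level reward,
    # regardless of what it contributed.
    reward = set_utility(qualities)
    return [reward] * len(qualities)


# One strong candidate, two weak ones, and one that adds nothing.
qualities = [0.9, 0.2, 0.2, 0.0]
rewards = uniform_rewards(qualities)
print(rewards)
```

Under this assumption, the candidate with quality 0.0 is rewarded exactly as much as the candidate with quality 0.9, which is the behavior the authors describe as free-riding.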

Technical Details

ShapE-GRPO’s core mechanism relies on the permutation invariance of set-level utility functions. Because the collective value of a set of recommendations does not change when its candidates are reordered, the authors derive a Shapley value decomposition that cleanly attributes individual contributions without violating the structure of the original reward function.

The Shapley value for a single candidate is its average marginal contribution across all possible orderings of the full candidate set. This gives each output a reward reflecting what it specifically added to collective utility — not what the strongest peer in its group earned. According to the paper’s abstract, the authors “derive a Shapley-enhanced formulation from cooperative game theory to decompose set-level rewards into granular, candidate-specific signals.”
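The definition above corresponds to the textbook Shapley value. The notation here is an assumption for illustration and may differ from the paper's: $N$ is the set of $n$ candidates, $v$ is the set-level utility, $\Pi(N)$ is the set of all orderings of $N$, and $P_i^{\pi}$ is the set of candidates that precede candidate $i$ in ordering $\pi$.

```latex
\phi_i(v)
  = \frac{1}{n!} \sum_{\pi \in \Pi(N)}
      \Bigl[ v\bigl(P_i^{\pi} \cup \{i\}\bigr) - v\bigl(P_i^{\pi}\bigr) \Bigr]
  = \sum_{S \subseteq N \setminus \{i\}}
      \frac{|S|!\,(n - |S| - 1)!}{n!}
      \Bigl[ v\bigl(S \cup \{i\}\bigr) - v(S) \Bigr]
```

The two forms are equivalent: the second groups orderings by the coalition $S$ that precedes $i$, and the weight counts how many orderings produce that coalition.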

The formulation satisfies the four classical Shapley axioms: efficiency (total reward is fully distributed across candidates), symmetry (identically contributing candidates receive equal rewards), linearity (rewards are additive across utility functions), and the null player property (a candidate that contributes nothing receives zero reward). A key practical concern for Shapley-based methods is computational cost, since exact computation typically requires exponential time; the authors state their formulation achieves polynomial-time complexity.
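The axioms can be checked numerically on a small example. The sketch below computes exact Shapley values by brute-force enumeration of all orderings, which takes factorial time and is therefore not the polynomial-time formulation the authors describe. The best-candidate utility and the names `set_utility` and `shapley_values` are assumptions for illustration.

```python
from itertools import permutations
from math import factorial


def set_utility(qualities):
    # Hypothetical utility: a set is as good as its best candidate.
    return max(qualities, default=0.0)


def shapley_values(qualities, utility=set_utility):
    """Exact Shapley values by enumerating every ordering (O(n!) work)."""
    n = len(qualities)
    totals = [0.0] * n
    for order in permutations(range(n)):
        chosen = []
        prev = utility(chosen)  # value of the empty set
        for i in order:
            chosen.append(qualities[i])
            cur = utility(chosen)
            totals[i] += cur - prev  # marginal contribution of candidate i
            prev = cur
    return [t / factorial(n) for t in totals]


qualities = [0.9, 0.2, 0.2, 0.0]
phi = shapley_values(qualities)
print(phi)
print(sum(phi), set_utility(qualities))  # efficiency: the totals match
```

On this toy set the rewards come out to roughly 0.767, 0.067, 0.067, and 0. The strong candidate keeps most of the credit, the two identical candidates receive equal shares (symmetry), the candidate that adds nothing receives zero (null player), and the shares sum to the set-level utility of 0.9 (efficiency).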

Empirically, the paper reports ShapE-GRPO “consistently outperforms standard GRPO across diverse datasets with accelerated convergence during training.” Specific dataset names, base model architectures, and numeric benchmark scores are detailed in the full paper but are not included in the abstract.

Who’s Affected

The method is most directly relevant to ML researchers and engineers applying GRPO or similar RL post-training techniques to fine-tune LLMs for multi-output generation tasks. Teams building recommendation systems, code completion tools, and brainstorming assistants would be the most likely adopters if the empirical results generalize.

Co-author David Simchi-Levi is a professor at MIT with a research background at the intersection of operations research and machine learning. His involvement reflects the method’s grounding in cooperative game theory, a field that originated in economics and operations research before finding applications in machine learning attribution problems.

What’s Next

The paper was submitted the day before this article’s publication and has not yet undergone peer review at a conference or journal. The abstract does not specify which base LLMs were evaluated, which reward models were used, or the magnitude of performance improvements over standard GRPO — details that practitioners will need before considering adoption.

The authors claim polynomial-time complexity for their Shapley formulation but do not quantify wall-clock training overhead relative to standard GRPO in the abstract. Independent replication across larger models and broader task distributions will be needed to assess whether the gains are consistent outside the specific experimental setup described in the paper.