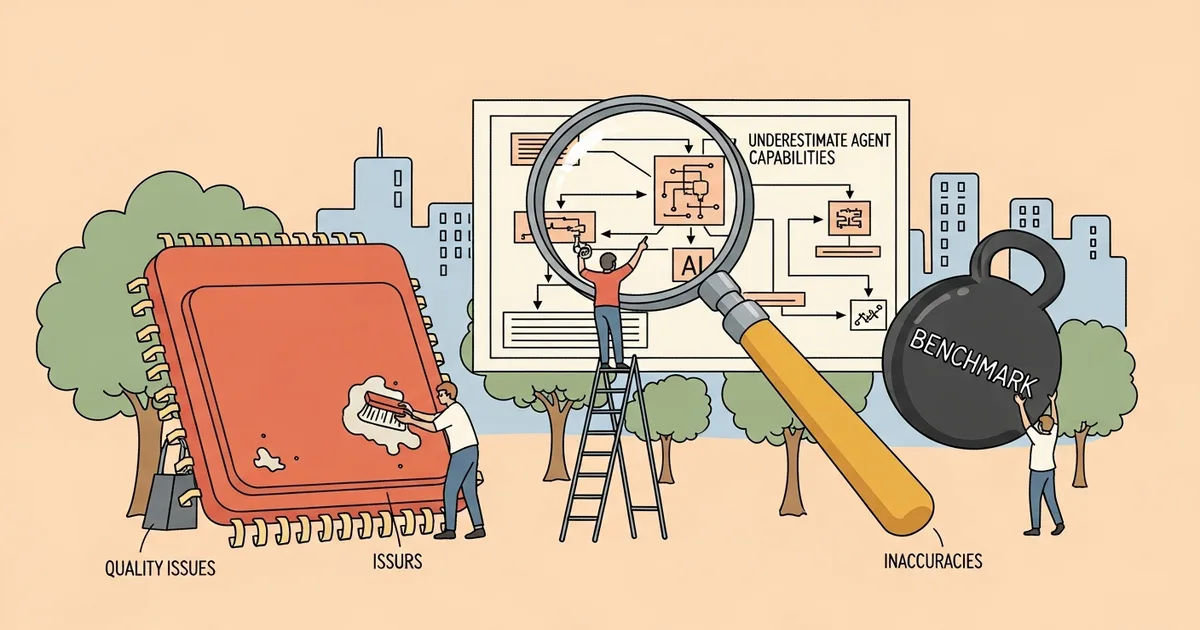

A paper submitted to arXiv on March 31, 2026 found that AI agents tasked with building Extract-Load-Transform (ELT) data pipelines had been systematically underrated on the field’s primary benchmark. The cause was not agent failure but flaws in the benchmark itself — including rigid evaluation scripts, ambiguous task descriptions, and incorrect ground truth that marked correct outputs as wrong.

- The original ELT-Bench contained benchmark-attributable errors — rigid evaluation scripts, ambiguous specifications, and incorrect ground truth — that penalized correct agent outputs.

- A new Auditor-Corrector methodology combining LLM-driven root-cause analysis with human validation achieved Fleiss’ kappa = 0.85, indicating strong inter-annotator agreement.

- When agents were re-evaluated on ELT-Bench-Verified, scores improved significantly — with the authors attributing the gains entirely to benchmark correction, not agent improvement.

- Extraction and loading stages of ELT pipelines are now largely solved by current large language models; transformation tasks remain harder but have improved substantially with newer models.

What Happened

Christopher Zanoli, Andrea Giovannini, Tengjun Jin, Ana Klimovic, and Yotam Perlitz submitted ELT-Bench-Verified to arXiv on March 31, 2026. The paper revisits results from ELT-Bench, the first benchmark for end-to-end ELT pipeline construction, which had previously shown AI agents achieving low success rates — particularly on transformation tasks.

The researchers found that poor benchmark design, not poor agent performance, was responsible for many of those apparent failures. To address this, the team constructed ELT-Bench-Verified: a corrected version of the benchmark with refined evaluation logic and fixed ground truth, released publicly alongside the paper.

Why It Matters

ELT pipelines are foundational to data engineering — they move data from source systems into storage infrastructure and reshape it for downstream use. Automating this process with AI agents has direct practical value, making it a high-stakes evaluation target for researchers and practitioners.

The study’s implications extend beyond ELT. The authors note that their findings echo “observations of pervasive annotation errors in text-to-SQL benchmarks,” suggesting that evaluation quality problems may be systemic across complex data engineering tasks. If benchmarks routinely penalize correct outputs, researchers risk misallocating effort away from capabilities that are further along than benchmark numbers suggest.

Technical Details

The team identified two independent causes of underestimation. The first is model capability: re-running the evaluations with more capable large language models showed that extraction and loading, the first two stages of an ELT pipeline, are now “largely solved,” while transformation performance improved significantly without any changes to the agents themselves.

Second, the team developed the Auditor-Corrector methodology: a pipeline that uses LLMs to identify the likely root cause of each failed task, then routes those findings to human annotators for validation. That human review step achieved Fleiss’ kappa = 0.85, a strong agreement score indicating the audit findings were consistent and reliable across annotators.
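For readers unfamiliar with the statistic, the following is a minimal sketch of how Fleiss' kappa is computed from multi-annotator labels. It is not the authors' code. The three benchmark-error labels come from the error classes the paper reports, while the `agent_error` label, the three-annotator setup, and the example ratings are assumptions made up for illustration.

```python
import math

# Root-cause labels. The first three mirror the paper's error classes;
# "agent_error" is an assumed extra label for genuine agent failures.
CATEGORIES = ["rigid_evaluation", "ambiguous_spec",
              "incorrect_ground_truth", "agent_error"]


def to_counts(labels_per_item, categories):
    """Turn per-item annotator labels into an items x categories count table."""
    return [[labels.count(c) for c in categories] for labels in labels_per_item]


def fleiss_kappa(counts):
    """Fleiss' kappa for counts[i][j] = number of raters putting item i in category j."""
    n_items = len(counts)
    n_raters = sum(counts[0])
    if n_raters < 2 or any(sum(row) != n_raters for row in counts):
        raise ValueError("every item needs the same number (>= 2) of ratings")
    n_cats = len(counts[0])

    # Share of all ratings that fell into each category.
    p_j = [sum(row[j] for row in counts) / (n_items * n_raters)
           for j in range(n_cats)]
    # Observed agreement per item: fraction of rater pairs that agree.
    p_i = [(sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
           for row in counts]

    p_bar = sum(p_i) / n_items          # mean observed agreement
    p_e = sum(p * p for p in p_j)       # agreement expected by chance
    if math.isclose(p_e, 1.0):
        raise ValueError("kappa is undefined when all ratings share one category")
    return (p_bar - p_e) / (1 - p_e)


# Hypothetical ratings: four failed tasks, three annotators each.
example_labels = [
    ["rigid_evaluation"] * 3,
    ["incorrect_ground_truth"] * 3,
    ["ambiguous_spec", "ambiguous_spec", "rigid_evaluation"],
    ["agent_error"] * 3,
]
print(round(fleiss_kappa(to_counts(example_labels, CATEGORIES)), 3))
```

A value of 1 means annotators always agree, and a value near 0 means agreement is no better than chance, which is why the statistic is preferred over raw percent agreement when some labels are much more common than others.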

The audit identified three classes of benchmark errors: rigid evaluation scripts that rejected structurally correct agent outputs, ambiguous task specifications that gave agents insufficient guidance to determine intended behavior, and outright incorrect ground truth. As the authors write in the paper, these errors “penalize correct agent outputs,” depressing apparent performance on the original benchmark.
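To make the first error class concrete, here is a hedged sketch of how a rigid evaluation script can reject a correct output. The article does not say which forms of rigidity the audit found, so the specific mismatches below (row order, column-name casing, floating-point rounding), the function names, and the sample rows are all assumptions for illustration, not ELT-Bench code.

```python
import math


def rigid_match(expected, actual):
    """Exact comparison: any difference in order, casing, or rounding fails."""
    return expected == actual


def _values_equal(a, b, rel_tol):
    # Compare numbers with a tolerance when a float is involved; otherwise exactly.
    if isinstance(a, float) or isinstance(b, float):
        if isinstance(a, (int, float)) and isinstance(b, (int, float)):
            return math.isclose(a, b, rel_tol=rel_tol)
        return False
    return a == b


def tolerant_match(expected, actual, key, rel_tol=1e-9):
    """Compare rows ignoring row order, column-name case, and float noise.

    `key` is the lowercase name of a column that uniquely identifies a row.
    """
    def normalize(rows):
        lowered = [{name.lower(): value for name, value in row.items()}
                   for row in rows]
        return sorted(lowered, key=lambda row: row[key])

    exp, act = normalize(expected), normalize(actual)
    if len(exp) != len(act):
        return False
    for e, a in zip(exp, act):
        if e.keys() != a.keys():
            return False
        if not all(_values_equal(e[col], a[col], rel_tol) for col in e):
            return False
    return True


# Hypothetical ground truth and a semantically identical agent output.
ground_truth = [
    {"customer_id": 1, "total": 30.0},
    {"customer_id": 2, "total": 12.5},
]
agent_output = [
    {"CUSTOMER_ID": 2, "TOTAL": 12.5},
    {"CUSTOMER_ID": 1, "TOTAL": 30.000000000001},
]

print(rigid_match(ground_truth, agent_output))
print(tolerant_match(ground_truth, agent_output, key="customer_id"))
```

The tolerant check still fails genuinely wrong values, so relaxing the comparison in this way does not by itself inflate scores. It only stops the evaluator from penalizing differences that carry no meaning for downstream use.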

Re-evaluating agents on ELT-Bench-Verified produced significant score improvements. The authors state these gains were “attributable entirely to benchmark correction” — not to any change in agent behavior or model capability.

Who’s Affected

Researchers publishing results on ELT pipeline automation benchmarks will need to migrate to ELT-Bench-Verified, because results from the original ELT-Bench are not directly comparable. The corrected benchmark has been publicly released alongside the paper.

Data engineering teams and platform vendors who evaluated AI agents using the original ELT-Bench should treat those results as potentially conservative. Agents that appeared to underperform may have practical capabilities closer to production use than prior benchmark scores indicated.

What’s Next

The authors argue that systematic quality auditing should become standard practice for complex agentic evaluations, not a post-hoc correction applied after anomalous results attract attention. The Auditor-Corrector methodology is presented as a reusable framework applicable to benchmarks beyond ELT.

Transformation tasks remain an open challenge. While ELT-Bench-Verified shows materially improved agent performance after benchmark correction, the paper does not claim that automating the transformation stage is a solved problem. The authors identify both further benchmark refinement and continued model development as necessary next steps.