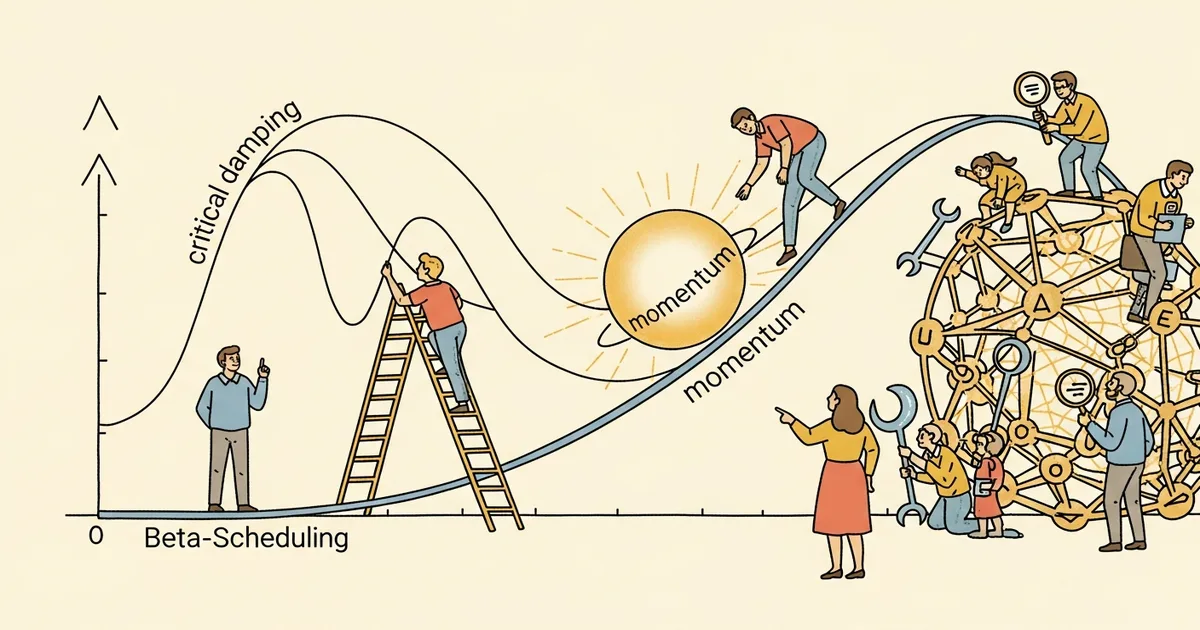

A paper posted to arXiv by Ivan Pasichnyk on March 30, 2026 proposes replacing the standard fixed momentum value used in neural network training with a schedule derived from the critically damped harmonic oscillator. The work frames its primary contribution not as an accuracy improvement but as a diagnostic framework for localizing and correcting specific failure modes in trained models.

- The formula mu(t) = 1 - 2*sqrt(alpha(t)) ties momentum directly to the current learning rate, requiring no additional hyperparameters

- On ResNet-18/CIFAR-10, the schedule achieved 1.9x faster convergence to 90% accuracy compared to standard constant momentum

- Per-layer gradient attribution identified the same three problem layers regardless of whether training used SGD or Adam — a 100% overlap across optimizers

- Targeted correction of those three layers fixed 62 misclassifications while retraining only 18% of model parameters

What Happened

On March 30, 2026, Ivan Pasichnyk posted a paper titled “Beta-Scheduling: Momentum from Critical Damping as a Diagnostic and Correction Tool for Neural Network Training” to arXiv (arXiv:2603.28921). It proposes replacing the fixed momentum value of 0.9 with a time-varying schedule derived from the critically damped harmonic oscillator. The constant-momentum convention dates to 1964 and has limited theoretical justification for its optimality in modern architectures. No institutional affiliation was listed in the submission.

Why It Matters

Momentum scheduling has been largely overlooked, while learning rate scheduling (cosine annealing, warmup, one-cycle policies) became standard practice across PyTorch and TensorFlow training pipelines over the past decade. Pasichnyk argues this asymmetry is physically inconsistent. In the harmonic oscillator analogy, optimal damping depends on the current stiffness of the system rather than on a fixed ratio, so holding momentum constant while the learning rate changes moves training away from the critically damped regime.
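The stiffness argument can be made concrete with a short worked derivation. This is a sketch under assumptions the article does not state: heavy-ball SGD on a one-dimensional quadratic with curvature lambda (a hypothetical symbol standing in for the stiffness), with the update read as a discretized damped oscillator at unit time step. The paper's own derivation may differ in detail.

```latex
% Heavy-ball update on f(x) = (\lambda/2) x^2 with learning rate \alpha and momentum \mu
\begin{align*}
x_{k+1} &= x_k + \mu\,(x_k - x_{k-1}) - \alpha\lambda\,x_k \\
% Rearranged into second difference + first difference + restoring term
0 &= (x_{k+1} - 2x_k + x_{k-1}) + (1-\mu)\,(x_k - x_{k-1}) + \alpha\lambda\,x_k \\
% Compare with m\ddot{x} + c\dot{x} + kx = 0: \; m = 1,\; c = 1-\mu,\; k = \alpha\lambda
% Critical damping requires c^2 = 4mk
(1-\mu)^2 &= 4\,\alpha\lambda
\quad\Longrightarrow\quad
\mu = 1 - 2\sqrt{\alpha\lambda}
\end{align*}
```

With unit curvature (lambda = 1) this reduces to mu = 1 - 2*sqrt(alpha), so under this reading the critically damped momentum has to move whenever the learning rate does.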

Prior work on adaptive optimizers such as Adam introduced per-parameter adaptive moment estimates but did not schedule the first-moment decay parameter (beta1) using a physics-derived closed-form expression. Pasichnyk’s derivation fills this gap with a formula that couples automatically to any existing learning rate schedule.

Technical Details

The core formula is mu(t) = 1 - 2*sqrt(alpha(t)), where alpha(t) is the learning rate at training step t. It is derived from the critical damping condition of a harmonic oscillator, the regime in which a system returns to equilibrium as quickly as possible without oscillating. The schedule requires zero free parameters beyond those already in the learning rate schedule. At high learning rates early in training, the formula produces lower momentum values (alpha = 0.01 gives mu = 0.8). As the learning rate decays, momentum rises toward conventional values (alpha = 0.0025 gives mu = 0.9), so the optimizer averages over a longer history of gradients as the loss landscape flattens.
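A minimal sketch of the formula coupled to a cosine learning rate schedule is shown here. The function names, the learning rate range (0.1 down to 0.0025), and the clamp at zero are assumptions made for illustration; the article does not say how the paper handles learning rates above 0.25, where the raw formula turns negative.

```python
import math


def critical_damping_momentum(lr: float) -> float:
    """Momentum from the formula mu = 1 - 2*sqrt(lr)."""
    if lr < 0:
        raise ValueError("learning rate must be non-negative")
    # Assumption: clamp at 0, because the raw formula is negative for lr > 0.25.
    return max(0.0, 1.0 - 2.0 * math.sqrt(lr))


def cosine_lr(step: int, total_steps: int, lr_max: float, lr_min: float) -> float:
    """Cosine annealing from lr_max at step 0 down to lr_min at the last step."""
    progress = step / max(1, total_steps - 1)
    return lr_min + 0.5 * (lr_max - lr_min) * (1.0 + math.cos(math.pi * progress))


# Illustrative run: momentum rises as the learning rate decays.
total_steps = 5
for step in range(total_steps):
    lr = cosine_lr(step, total_steps, lr_max=0.1, lr_min=0.0025)
    mu = critical_damping_momentum(lr)
    print(f"step {step}: lr={lr:.4f}  mu={mu:.3f}")
```

Because the momentum is a pure function of the learning rate, any existing schedule can be swapped in for `cosine_lr` without further tuning.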

Tested on ResNet-18 trained on CIFAR-10, the beta-schedule reached 90% accuracy 1.9x faster than constant momentum. A hybrid configuration (physics-derived momentum during early training, then constant momentum for final refinement) was the fastest of the five methods compared in the paper to reach 95% accuracy.
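The hybrid configuration can be sketched as a single switch between the two regimes. The article does not say when the paper switches, so `switch_step` is a hypothetical parameter, and the default `final_momentum` of 0.9 is simply the conventional value mentioned above.

```python
import math


def hybrid_momentum(step: int, lr: float, switch_step: int,
                    final_momentum: float = 0.9) -> float:
    """Critical-damping momentum before switch_step, constant momentum after.

    switch_step is a hypothetical knob; the source does not give its value.
    """
    if step < switch_step:
        # Early training: momentum follows the learning rate (clamped at 0).
        return max(0.0, 1.0 - 2.0 * math.sqrt(lr))
    # Final refinement: fall back to a fixed momentum.
    return final_momentum
```

Note that the switch point is itself a choice to be made, so this variant is no longer strictly parameter-free.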

The diagnostic application operates separately from convergence speed. Under the beta-schedule, per-layer gradient attributions were computed and cross-referenced between SGD and Adam training runs. The same three layers were flagged as problematic by both optimizers, a 100% overlap, suggesting the attribution pattern is optimizer-agnostic rather than an artifact of a specific algorithm. As the paper states: “The main contribution is not an accuracy improvement but a principled, parameter-free tool for localizing and correcting specific failure modes in trained networks.”
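The cross-optimizer comparison can be illustrated with a small sketch of the final step only. The article does not specify how the per-layer attribution scores are computed, so the scores here are placeholder numbers with made-up layer names, not values from the paper, and `top_k_layers` and `overlap_fraction` are hypothetical helpers.

```python
def top_k_layers(scores: dict, k: int = 3) -> set:
    """Return the names of the k layers with the largest attribution score."""
    ranked = sorted(scores, key=scores.get, reverse=True)
    return set(ranked[:k])


def overlap_fraction(a: set, b: set) -> float:
    """Share of flagged layers common to both runs (1.0 means identical sets)."""
    if not a and not b:
        return 1.0
    return len(a & b) / max(len(a), len(b))


# Placeholder scores for illustration only; not results from the paper.
sgd_scores = {"block1": 0.2, "block2": 0.9, "block3": 0.8, "block4": 0.7, "head": 0.1}
adam_scores = {"block1": 0.3, "block2": 0.6, "block3": 0.9, "block4": 0.8, "head": 0.2}

flagged_sgd = top_k_layers(sgd_scores)
flagged_adam = top_k_layers(adam_scores)
print(overlap_fraction(flagged_sgd, flagged_adam))  # 1.0 for these placeholder scores
```

The ranking within each run differs in this toy input, yet the flagged sets match, which is the sense in which a 100% overlap is optimizer-agnostic.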

Applying corrections to only the three identified layers resolved 62 misclassifications in the test set while retraining just 18% of the network’s parameters — a substantially narrower intervention than full retraining or broad fine-tuning.
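One common way to restrict retraining to a few layers is to freeze everything else. The sketch below assumes a PyTorch-style model exposing `named_parameters()`, with parameters that have `requires_grad` and `numel()`; the helper name and the prefix-matching rule are hypothetical, and the article does not describe how the paper applied its corrections.

```python
def freeze_except(model, trainable_prefixes) -> float:
    """Freeze all parameters whose name does not start with a given prefix.

    Returns the fraction of parameters left trainable.
    """
    total = 0
    trainable = 0
    for name, param in model.named_parameters():
        keep = any(name.startswith(prefix) for prefix in trainable_prefixes)
        param.requires_grad = keep  # frozen parameters receive no gradient updates
        count = param.numel()
        total += count
        if keep:
            trainable += count
    return trainable / total if total else 0.0
```

In a PyTorch script, the optimizer would then be built over `[p for p in model.parameters() if p.requires_grad]`, and the returned fraction gives a quick check against a figure such as the 18% reported here.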

Who’s Affected

Practitioners training convolutional networks with SGD or Adam can integrate the beta-schedule formula into existing pipelines with minimal changes, since it introduces no new hyperparameters beyond those already required by the learning rate schedule. The targeted correction workflow is most immediately relevant for teams managing deployed models with known, localized error clusters, offering a lower-cost retraining path than broad fine-tuning.
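As a rough illustration of what "minimal changes" could look like, the helper below rewrites the momentum of each parameter group from its current learning rate. It relies only on the PyTorch optimizer convention that `optimizer.param_groups` is a list of dicts with an `"lr"` key plus `"momentum"` (SGD) or `"betas"` (Adam). The helper name, the clamp at zero, and the choice to map the formula onto Adam's beta1 are assumptions, not details confirmed by the article.

```python
import math


def apply_beta_schedule(optimizer) -> None:
    """Set each param group's momentum from that group's current learning rate."""
    for group in optimizer.param_groups:
        # Formula from the paper; the clamp at 0 is an added assumption.
        mu = max(0.0, 1.0 - 2.0 * math.sqrt(group["lr"]))
        if "momentum" in group:
            # SGD-style group: overwrite the momentum coefficient.
            group["momentum"] = mu
        elif "betas" in group:
            # Adam-style group (assumed mapping): replace beta1, keep beta2.
            group["betas"] = (mu, group["betas"][1])
```

In a training loop this would be called once per step, right after the learning rate scheduler updates `group["lr"]`, so that the momentum always reflects the rate used for the next update.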

Optimizer researchers may find the physics derivation useful as a foundation for further momentum scheduling work, particularly in training regimes where learning rates vary significantly across stages.

What’s Next

The paper’s experiments are confined to ResNet-18 on CIFAR-10, and Pasichnyk does not test whether the 1.9x convergence speedup or the optimizer-invariant diagnostic properties extend to transformer architectures, larger-scale datasets such as ImageNet, or language model training pipelines. Independent replication across diverse architectures is the necessary next step before broader adoption can be assessed. The zero-parameter formula makes it straightforward to add to existing training scripts for such follow-up tests.