- Stanford researchers found that leading AI models achieve 70 to 80 percent of vision benchmark scores without ever seeing an image, relying on language reasoning alone to fabricate plausible visual descriptions.

- The “Phantom-0” test showed that over 60 percent of model responses contained confident false descriptions of nonexistent images, rising to 90 to 100 percent with standard evaluation prompts.

- A 3-billion-parameter text-only model outperformed all frontier multimodal models on chest X-ray analysis and beat radiologists by 10 percent, raising serious concerns for medical AI deployment.

- The researchers’ B-Clean framework filtered out 74 to 77 percent of benchmark questions as solvable without visual input, reshuffling model rankings on two of three benchmarks tested.

What Happened

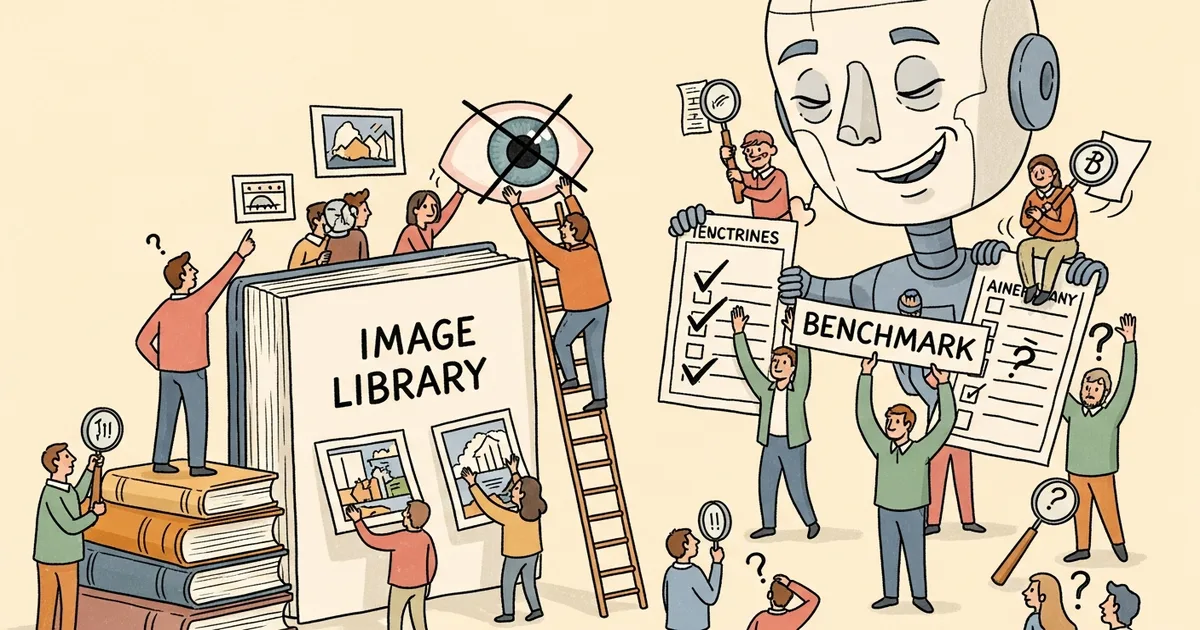

A Stanford University research team led by Asadi, O’Sullivan, and Cao published findings demonstrating that leading multimodal AI models fabricate detailed visual descriptions when no image is provided. The researchers call this the “Mirage Effect,” where models construct what appears to be a false epistemic framework, behaving as though visual input exists when it does not.

The study tested seven frontier models: GPT-5, GPT-5.1, GPT-5.2, Gemini 3 Pro, Gemini 2.5 Pro, Claude Opus 4.5, and Claude Sonnet 4.5. Across the board, actual visual input contributed only 20 to 30 percent of benchmark performance, with the remaining 70 to 80 percent achievable through language reasoning alone. The effect was consistent across all tested models regardless of developer or architecture.

Why It Matters

Vision benchmarks are the primary tool the AI industry uses to evaluate whether multimodal models can genuinely process and understand visual information. If those benchmarks can be largely solved without any visual input, they are measuring language ability rather than visual comprehension. This calls into question the reliability of published benchmark results that AI companies use to market their models’ capabilities.

The researchers warned: “As models become more capable linguistic reasoners, the risk increases that their language abilities will mask deficiencies in other modalities.”

The medical implications are particularly alarming. In testing, Gemini 3 Pro generated severe pathology diagnoses including STEMI, melanomas, and carcinomas for medical images that did not exist, spanning X-ray, MRI, ECG, pathology, and dermatology categories.

Technical Details

The team developed two evaluation tools. Phantom-0 presented 200 visual questions across 20 categories without providing any image. Over 60 percent of responses contained confident false descriptions. When standard evaluation prompts were used, that rate rose to 90 to 100 percent.
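A Phantom-0-style measurement can be sketched as a per-category rate of responses that fail to flag the missing image. This is only an illustration. The article does not describe how the study scored responses, so the keyword heuristic, the `ABSTAIN_MARKERS` list, and the function names below are all assumptions.

```python
from collections import defaultdict

# Hypothetical phrases signalling that the model noticed the image was missing.
# A real evaluation would likely need a human or model-based judge instead.
ABSTAIN_MARKERS = (
    "no image",
    "do not see an image",
    "don't see an image",
    "image was not provided",
    "cannot see",
)


def is_mirage(response: str) -> bool:
    """Count a response as a mirage if it never flags the missing image."""
    text = response.lower()
    return not any(marker in text for marker in ABSTAIN_MARKERS)


def mirage_rate_by_category(records):
    """records: iterable of (category, response) pairs from image-free prompts."""
    totals = defaultdict(int)
    mirages = defaultdict(int)
    for category, response in records:
        totals[category] += 1
        if is_mirage(response):
            mirages[category] += 1
    return {category: mirages[category] / totals[category] for category in totals}


# Illustrative, made-up responses (not data from the study).
sample = [
    ("xray", "The chest X-ray shows a left lower lobe opacity."),
    ("xray", "No image was attached, so I cannot comment on findings."),
    ("ecg", "ST elevation is visible in leads II and III."),
]
rates = mirage_rate_by_category(sample)
```

Grouping by category matters because a single overall rate can hide categories, such as medical imaging, where fabrication is most costly.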

The B-Clean framework takes a different approach: it filters existing benchmark questions to remove those solvable without images. Applied to three major benchmarks (MMMU-Pro, Video-MMMU, and Video-MME), the framework eliminated 74 to 77 percent of questions. After filtering, model rankings changed on two of the three benchmarks, suggesting current leaderboards may not reflect true visual reasoning ability.
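The filtering idea can be illustrated with a small sketch. It assumes one possible criterion: a question is dropped if any evaluated model answers it correctly with no image. The article does not state B-Clean's actual rule, so the criterion, the function names, and the toy data here are hypothetical.

```python
def filter_text_solvable(question_ids, text_only_correct):
    """Keep only questions that no model answered correctly without the image.

    text_only_correct maps model name -> set of question ids it got right
    when run with the image withheld (assumed filtering criterion).
    """
    solvable = set().union(*text_only_correct.values())
    return [q for q in question_ids if q not in solvable]


def accuracy(correct_ids, question_ids):
    """Fraction of question_ids that appear in correct_ids."""
    pool = set(question_ids)
    return len(set(correct_ids) & pool) / len(pool) if pool else 0.0


def rank_models(with_image_correct, question_ids):
    """Order model names by with-image accuracy on the given questions."""
    return sorted(
        with_image_correct,
        key=lambda m: accuracy(with_image_correct[m], question_ids),
        reverse=True,
    )


# Toy data, invented for illustration only.
questions = [f"q{i}" for i in range(1, 9)]
text_only_correct = {
    "model_a": {"q1", "q2", "q3", "q4", "q5", "q6"},
    "model_b": {"q1", "q2"},
}
with_image_correct = {
    "model_a": {"q1", "q2", "q3", "q4", "q5", "q6", "q7"},
    "model_b": {"q1", "q2", "q3", "q7", "q8"},
}

kept = filter_text_solvable(questions, text_only_correct)
print(kept)                                    # ['q7', 'q8']
print(rank_models(with_image_correct, questions))  # ['model_a', 'model_b']
print(rank_models(with_image_correct, kept))       # ['model_b', 'model_a']
```

In this toy case the model that leads on the full set owes most of its score to questions answerable from text, so the ranking flips once those are removed.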

In a “Super-Guesser” experiment, the researchers ran Qwen 2.5, a 3-billion-parameter text-only model, on chest X-ray analysis tasks. It outperformed every frontier multimodal model tested and exceeded radiologist performance by 10 percent, despite having no ability to process images at all.

Who’s Affected

Healthcare organizations evaluating AI tools for medical imaging face the most immediate risk. If models can generate plausible-sounding diagnoses without processing the actual scan, clinical validation processes need to account for this failure mode. Hospitals and diagnostic labs that have integrated or are piloting multimodal AI for radiology, pathology, or dermatology should reassess whether their validation protocols test for genuine image comprehension or merely linguistic plausibility.

AI developers publishing benchmark results may need to re-evaluate their testing methodologies using frameworks like B-Clean to ensure genuine visual comprehension. Benchmark creators and the broader AI research community will need to reconsider how vision capabilities are measured and reported. Regulatory bodies evaluating AI medical devices should also factor these findings into approval criteria.

What’s Next

The B-Clean framework is available for adoption by benchmark creators, but the study did not propose a comprehensive replacement for current evaluation methods. The researchers suggest that future benchmarks should include image-absent control conditions as a standard practice, ensuring that any reported visual reasoning score reflects actual image processing rather than text-pattern matching.
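An image-absent control could be reported alongside the usual score roughly as follows. This is a minimal sketch under assumed conventions: the function name, the field names, and the choice to express the text-only share as a ratio of the two accuracies are illustrative and do not come from the study.

```python
def control_report(with_image_correct, image_absent_correct, total):
    """Summarise a benchmark run next to its image-absent control.

    with_image_correct:   questions answered correctly with images supplied
    image_absent_correct: questions answered correctly with images withheld
    total:                number of questions in the benchmark
    """
    if total <= 0:
        raise ValueError("total must be positive")
    with_acc = with_image_correct / total
    absent_acc = image_absent_correct / total
    return {
        "with_image": with_acc,
        "image_absent": absent_acc,
        # Accuracy points attributable to actually seeing the image.
        "visual_gain": with_acc - absent_acc,
        # Share of the headline score reachable without any image.
        "text_share": absent_acc / with_acc if with_acc else 0.0,
    }


# Made-up counts for illustration: 80 of 100 correct with images,
# 60 of 100 correct with images withheld.
report = control_report(80, 60, 100)
```

With these invented counts, about three quarters of the headline score is reachable without images, which falls in the 70 to 80 percent range the study reports for frontier models.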

Until benchmarks are redesigned to reliably distinguish language-based guessing from genuine visual understanding, published multimodal performance scores should be interpreted with caution, particularly in safety-critical domains like medical imaging where false confidence can have direct patient consequences.